A Five-Point Security Checklist for the Multi-Agent Future

AI agents are moving fast, and security is scrambling to catch up. This post breaks down why static policies aren’t enough and gives security leaders a checklist to get ahead of the exposure.


The cybersecurity industry is having a collective epiphany about AI agent security right now. And the punchline is something defenders figured out years ago.

Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. Google Research, DeepMind, and MIT published a controlled evaluation of 180 agent configurations showing an 80.8% performance gain from centralized coordination on parallelizable tasks. Anthropic’s MCP and Google’s A2A are standardizing how agents talk to each other. The adoption curve is vertical.

The security conversation is predictably trailing behind it.

The threat model nobody’s scoping correctly

Here’s what multi-agent deployment actually looks like. Twenty agents with cross-system access to your CRM, code repos, cloud infra, and databases. One gets compromised via prompt injection. Lateral movement becomes real. Not theoretical. Real.

The early research confirms it.

| Result | What was measured | Source |
|---|---|---|
| 97% | Probability of executing arbitrary malicious code via a malicious local file | “MAS Execute Arbitrary Malicious Code,” COLM 2025 (Magentic-One + GPT-4o) |
| 65% | Success rate for local file-based exfiltration of private user data | “MAS Execute Arbitrary Malicious Code,” COLM 2025 (CrewAI + GPT-4o) |
| 80–90% | Share of an attack campaign executed autonomously by an AI agent, with minimal human intervention | Anthropic GTG-1002 disclosure, Nov 2025 (first documented AI-orchestrated cyberattack) |

These aren’t theoretical attack models. These are measured outcomes from production-grade agent frameworks and, in the case of GTG-1002, a real-world espionage campaign against roughly 30 global targets. Agentic AI security isn’t a future problem. It’s a current one.

Container isolation alone isn’t the answer. Agents aren’t traditional workloads. Their behavior is driven by LLMs, which means it’s inherently unpredictable. They dynamically request new permissions, communicate with arbitrary services, and can be socially engineered through prompt injection. You can’t firewall your way out of that.

Two gaps, not one

The industry response so far focuses on the access control layer. FINOS published a multi-agent isolation framework. Cisco is extending ZTNA to agents. Microsoft launched Entra Agent ID for non-human identity management. CSA’s Agentic Trust Framework argues that agent autonomy should be earned through demonstrated performance, not granted by default. The OWASP Top 10 for Agentic Applications now catalogs the highest-impact vulnerabilities. All good work.

But it only addresses half the problem. Limiting permissions and isolating agents so they can’t cross boundaries is necessary. Monitoring what agents are actually doing at execution time is equally necessary. A single action might look fine. A sequence of actions can reveal an attack pattern.
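Here is a minimal Python sketch of the single-action versus sequence point. Everything in it is hypothetical: the `Action` record, the `allowed` per-action policy, the sensitive-system and approved-host sets, and the window size are illustrative assumptions, not any framework’s API. Each action passes the per-action check on its own. Only the sequence trips the detector: a sensitive read followed shortly by an outbound call to an unapproved host.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    agent: str   # agent identifier
    kind: str    # hypothetical action types: "read" or "http_out"
    target: str  # system name for reads, hostname for outbound calls


# Illustrative assumptions, not real inventories.
SENSITIVE_SYSTEMS = {"crm", "code_repo", "customer_db"}
APPROVED_HOSTS = {"api.partner.example.com"}


def allowed(a: Action) -> bool:
    """Per-action policy: this agent may read the CRM and make outbound calls."""
    return (a.kind == "read" and a.target == "crm") or a.kind == "http_out"


def exfil_sequences(actions: list[Action], window: int = 3) -> list[tuple[Action, Action]]:
    """Flag a sensitive read followed, within `window` later actions by the same
    agent, by an outbound call to a host outside APPROVED_HOSTS."""
    # Per-agent sliding window of the most recent `window` actions.
    recent: dict[str, deque] = defaultdict(lambda: deque(maxlen=window))
    hits = []
    for a in actions:
        past = recent[a.agent]
        if a.kind == "http_out" and a.target not in APPROVED_HOSTS:
            for prev in past:
                if prev.kind == "read" and prev.target in SENSITIVE_SYSTEMS:
                    hits.append((prev, a))
                    break
        past.append(a)
    return hits


log = [
    Action("research-agent", "read", "crm"),
    Action("research-agent", "http_out", "api.partner.example.com"),
    Action("research-agent", "http_out", "paste.attacker.example"),
]
print(all(allowed(a) for a in log))  # True: every action passes on its own
print(len(exfil_sequences(log)))     # 1: the read-then-send sequence is flagged
```

A real detector would need richer signals, such as elapsed time, data volume, and per-agent baselines. The point is only that the signal lives in the sequence, not in any single call.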

The access control layer gets most of the attention. The behavioral observation layer barely exists yet.

And the blind spot isn’t where most people think. Server-side agents have ops teams and infrastructure controls around them. User-side agents are the real exposure. They run on devices that enterprises can barely govern, and they become pivot points into backend systems. If you can’t see what an agent is doing at the API level before it reaches external services, you have a gap that no amount of policy will close.

This is the same lesson vulnerability management keeps teaching

We spent two decades building vulnerability management programs around static snapshots. Scan the environment, count the CVEs, rank by CVSS, patch in priority order. The model assumes you can see everything that matters by looking at what’s installed rather than what’s executing.

It was never true. A BIOS vulnerability with a critical CVSS score that requires physical access to the machine is functionally irrelevant. A medium-severity library that’s loaded into memory, listening on a network port, and running with elevated privileges is an emergency. The score didn’t tell you that. Runtime context did.
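The BIOS-versus-library contrast can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `Finding` record, its runtime flags, and the multipliers in `runtime_priority` are hypothetical, not a published scoring method. Sorting by CVSS alone puts the BIOS flaw first. Folding in runtime context flips the order.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float
    requires_physical_access: bool = False
    loaded_in_memory: bool = False
    listening_on_network: bool = False
    elevated_privileges: bool = False


def runtime_priority(f: Finding) -> float:
    """Hypothetical priority: CVSS adjusted by runtime context.
    The weights are illustrative assumptions only."""
    if f.requires_physical_access:
        return f.cvss * 0.1   # not reachable without hands on the machine
    if not f.loaded_in_memory:
        return f.cvss * 0.3   # installed, but not executing
    score = f.cvss
    if f.listening_on_network:
        score *= 1.5          # reachable over the network
    if f.elevated_privileges:
        score *= 1.5          # compromise yields high privileges
    return score


findings = [
    Finding("BIOS firmware flaw", cvss=9.8, requires_physical_access=True),
    Finding("medium-severity library", cvss=5.5, loaded_in_memory=True,
            listening_on_network=True, elevated_privileges=True),
]
by_cvss = [f.name for f in sorted(findings, key=lambda f: f.cvss, reverse=True)]
by_runtime = [f.name for f in sorted(findings, key=runtime_priority, reverse=True)]
print(by_cvss)     # ['BIOS firmware flaw', 'medium-severity library']
print(by_runtime)  # ['medium-severity library', 'BIOS firmware flaw']
```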

The agent security conversation is arriving at the same conclusion from a different direction. You can write all the policies you want about what an agent is allowed to do. You can isolate it in a container and restrict its permissions. But if you can’t see what it’s actually doing at execution time, you’re guessing.

What I’d be doing right now

If you’ve run a vulnerability management program, you already have the muscle memory for what comes next. The agent security problem follows the same arc. Here’s what I’d be doing right now if I were still sitting in the CISO chair.

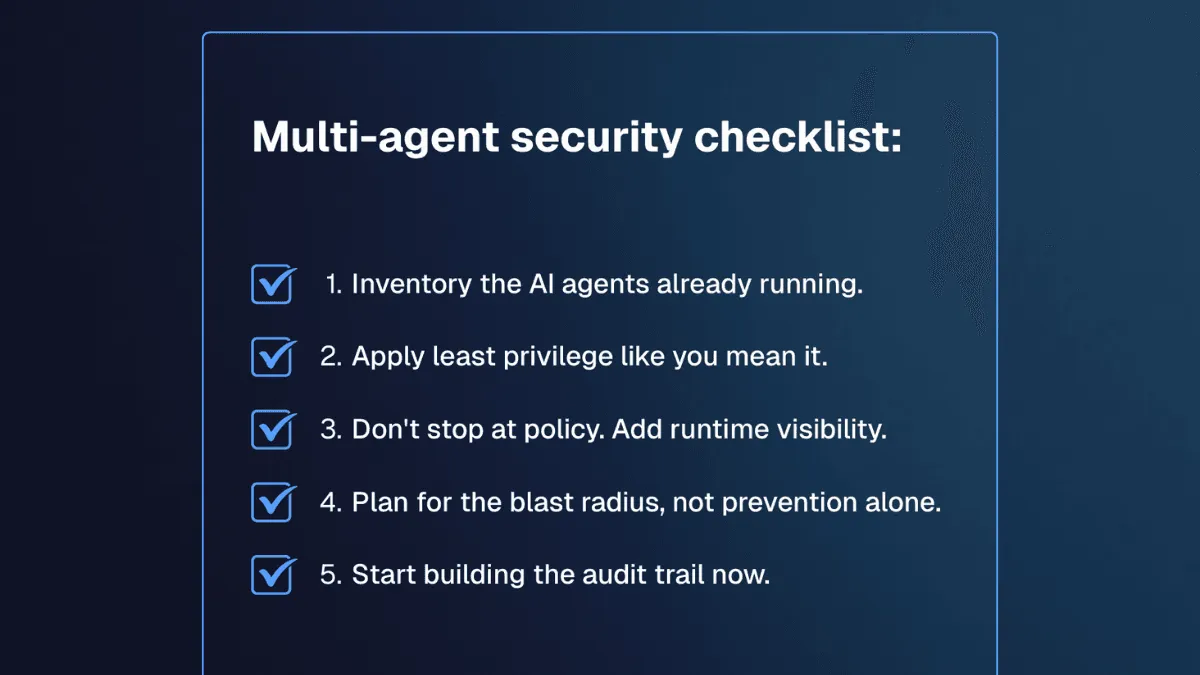

1. Inventory what’s already running. Most organizations I talk to can’t answer the question “how many agents have access to production systems?” with any confidence. Shadow AI is the new shadow IT. Developers are spinning up agents with API keys and broad permissions, and security teams don’t have visibility into it. Before you can secure agents, you need to know they exist. Treat this like an asset discovery problem, because it is one.

2. Apply least privilege like you mean it. CSA’s Agentic Trust Framework gets this right. Agent autonomy should be earned, not granted. Start agents with minimal permissions and expand based on demonstrated behavior. If you ran a PAM program, you know the drill. The difference is that agents request permissions dynamically, so you need policy enforcement that operates in real time, not through quarterly access reviews.

3. Don’t stop at policy. Add runtime visibility. This is where vulnerability management’s biggest lesson applies directly. We spent years writing policies about patch SLAs and risk acceptance thresholds. None of it mattered if you couldn’t see what was actually running in your environment. Same principle here. You can define what an agent is allowed to do. But you also need visibility into what it’s actually doing at execution time. What API calls is it making? What network connections is it opening? What privileges is it operating with? Runtime visibility is the layer that turns policy from a compliance artifact into an operational control.

4. Plan for the blast radius, not prevention alone. Agents will get compromised. Prompt injection is too effective and too easy to deploy for that not to happen. The question is whether a compromised agent can pivot laterally into your CRM, your code repos, your cloud infra. Microsegmentation helps. Identity-driven access helps. But the real test is whether you can detect anomalous behavior in the sequence of actions an agent takes and cut it off before the damage spreads. That’s a detection and response problem, not an access control problem.

5. Start building the audit trail now. The EU AI Act’s high-risk provisions hit in August 2026. That’s five months away. Multi-purpose agents are presumed high-risk by default, which means organizations will need to demonstrate they can audit and explain agent behavior. If your current approach is static policies and point-in-time snapshots, that’s going to be a painful conversation with your auditors. Continuous runtime context is the foundation of any defensible compliance posture: what was this agent doing, when, with what permissions, and what did it access.
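Items 2, 3, and 5 can share one enforcement point. Below is a minimal Python sketch of that idea under stated assumptions. It does not describe any product or framework. A hypothetical `RuntimeGuard` checks each permission request as it happens and grants broader permissions only after a run of clean actions; the promotion ladder and starting permission are made up. It writes every decision, with the permissions held at that moment, to a hash-chained audit log so that later edits are detectable. Nothing here is prescribed by CSA’s Agentic Trust Framework or the EU AI Act.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field

GENESIS = "0" * 64
# Hypothetical promotion ladder: (clean actions required, permission unlocked).
PROMOTIONS = [(50, "read:crm"), (200, "write:crm")]


@dataclass
class AgentPolicy:
    agent_id: str
    # Least privilege: every agent starts with one narrow permission (assumption).
    granted: set = field(default_factory=lambda: {"read:tickets"})
    clean_streak: int = 0


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class RuntimeGuard:
    """Enforce permissions per request, expand them as trust is earned, and
    record every decision in a tamper-evident (hash-chained) audit log."""

    def __init__(self) -> None:
        self.policies: dict[str, AgentPolicy] = {}
        self.audit: list[dict] = []

    def register(self, agent_id: str) -> None:
        self.policies[agent_id] = AgentPolicy(agent_id)

    def authorize(self, agent_id: str, permission: str, resource: str) -> bool:
        policy = self.policies.get(agent_id)
        allowed = policy is not None and permission in policy.granted
        # Log what the agent held at decision time, before any promotion.
        self._record(agent_id, permission, resource, allowed,
                     sorted(policy.granted) if policy else [])
        if policy is None:
            return False
        if allowed:
            policy.clean_streak += 1
            for threshold, perm in PROMOTIONS:
                if policy.clean_streak >= threshold:
                    policy.granted.add(perm)
        else:
            # A denied request resets the streak; granted permissions are kept here.
            policy.clean_streak = 0
        return allowed

    def _record(self, agent_id, permission, resource, allowed, granted) -> None:
        body = {
            "ts": time.time(),
            "agent": agent_id,
            "permission": permission,
            "resource": resource,
            "allowed": allowed,
            "granted": granted,
            "prev": self.audit[-1]["hash"] if self.audit else GENESIS,
        }
        self.audit.append({**body, "hash": _digest(body)})

    def verify_chain(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.audit:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


guard = RuntimeGuard()
guard.register("support-agent")
print(guard.authorize("support-agent", "read:crm", "acct/42"))  # False: not yet earned
for i in range(50):
    guard.authorize("support-agent", "read:tickets", f"ticket/{i}")
print(guard.authorize("support-agent", "read:crm", "acct/42"))  # True: earned
print(guard.verify_chain())                                     # True
```

Each audit entry answers the questions in item 5: which agent acted, when, with what permissions, and against which resource. A production version would also need durable storage and trustworthy timestamps, which this sketch leaves out.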

This is a runtime problem

The agent security space will mature. The frameworks being proposed today (FINOS, CSA MAESTRO, Microsoft Entra Agent ID, OWASP ASI Top 10) are a necessary foundation. But the teams deploying agents at scale will hit the same wall defenders have been hitting for years in vulnerability management.

You can’t secure what you can’t observe at execution time.

Agents make that gap more visible because you literally cannot predict what they’ll do from their configuration alone. The only way to know is to watch what’s happening at runtime.

That’s the problem we’re solving at Spektion. Runtime exposure data that shows you what’s actually executing, not what a scan or a policy says should be happening. The technology applies whether the workload is a traditional server process or an AI agent making API calls. I’ll spare you the full pitch.

The multi-agent future has a security problem. The answer was always runtime.

---

Ready to secure AI agents on your endpoints? Reach out.